Reasoning and reliability in AI | MIT News

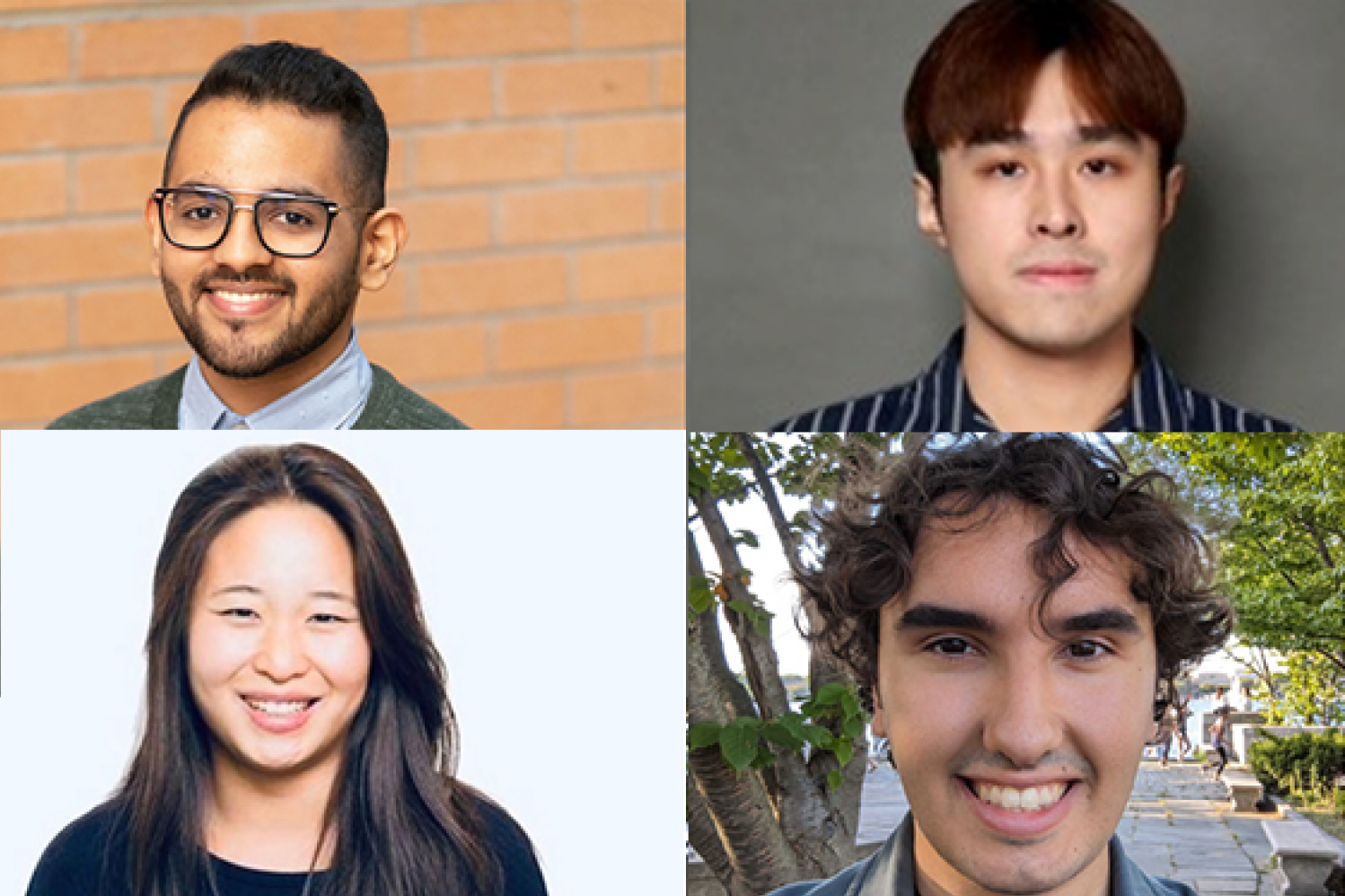

In order for natural language to be an effective form of communication, the parties involved need to be able to understand words and their context, assume that the content is largely shared in good faith and is trustworthy, reason about the information being shared, and then apply it to real-world scenarios. MIT PhD students interning with the MIT-IBM Watson AI Lab — Athul Paul Jacob SM ’22, Maohao Shen SM ’23, Victor Butoi, and Andi Peng SM ’23 — are working to attack each step of this process that’s baked into natural language models, so that the AI systems can be more dependable and accurate for users.

To achieve this, Jacob’s research strikes at the heart of existing natural language models to improve the output, using game theory. His interests, he says, are two-fold: “One is understanding how humans behave, using the lens of multi-agent systems and language understanding, and the second thing is, ‘How do you use that as an insight to build better AI systems?’” His work stems from the board game “Diplomacy,” where his research team developed a system that could learn and predict human behaviors and negotiate strategically to achieve a desired, optimal outcome.

“This was a game where you need to build trust; you need to communicate using language. You need to also play against six other players at the same time, which were very different from all the kinds of task domains people were tackling in the past,” says Jacob, referring to other games like poker and Go that researchers have tackled with neural networks. “In doing so, there were a lot of research challenges. One was, ‘How do you model humans? How do you know when humans tend to act irrationally?’” Jacob and his research mentors — including Associate Professor Jacob Andreas and Assistant Professor Gabriele Farina of the MIT Department of Electrical Engineering and Computer Science (EECS), and the MIT-IBM Watson AI Lab’s Yikang Shen — recast the problem of language generation as a two-player game.

Using “generator” and “discriminator” models, Jacob’s team developed a natural language system that produces answers to questions and then observes those answers and determines whether they are correct. If they are, the AI system receives a point; if not, no point is awarded. Language models notoriously tend to hallucinate, making them less trustworthy; this collaborative no-regret learning algorithm takes a natural language model and encourages the system’s answers to be more truthful and reliable, while keeping the solutions close to the pre-trained language model’s priors. Jacob says that using this technique in conjunction with a smaller language model could likely make it competitive with a model many times bigger.
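
The generator-and-discriminator game described above can be illustrated with a toy sketch. It is not the team's algorithm: the update rule is a generic regularized multiplicative-weights step, and every name and number in it (`consensus_sketch`, `lam`, `eta`, the prior tables) is hypothetical. It only shows the shape of the idea, in which two players are rewarded for agreeing while a penalty keeps each one close to its starting prior.

```python
import math

def softmax(scores):
    """Turn a list of scores into probabilities (numerically stable)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def consensus_sketch(gen_prior, disc_prior, steps=200, lam=0.1, eta=0.1):
    """Toy agreement game (illustrative assumption, not the published method).

    gen_prior[v][y]:  prior P(answer y | label v), label 0 = incorrect, 1 = correct
    disc_prior[y][v]: prior P(label v | answer y)
    All prior entries must be strictly positive because we take their logs.
    """
    n_labels, n_answers = len(gen_prior), len(disc_prior)
    gen = [row[:] for row in gen_prior]
    disc = [row[:] for row in disc_prior]
    # Running averages of the payoff each player has seen for each choice.
    q_gen = [[0.0] * n_answers for _ in range(n_labels)]
    q_disc = [[0.0] * n_labels for _ in range(n_answers)]
    for t in range(1, steps + 1):
        for v in range(n_labels):
            for y in range(n_answers):
                # Each player scores a point when the other side agrees.
                q_gen[v][y] += (disc[y][v] - q_gen[v][y]) / t
                q_disc[y][v] += (gen[v][y] - q_disc[y][v]) / t
        # lam pulls the policies back toward the priors; eta is a step size.
        scale = 1.0 / (eta * t) + lam
        gen = [softmax([(q_gen[v][y] + lam * math.log(gen_prior[v][y])) / scale
                        for y in range(n_answers)]) for v in range(n_labels)]
        disc = [softmax([(q_disc[y][v] + lam * math.log(disc_prior[y][v])) / scale
                         for v in range(n_labels)]) for y in range(n_answers)]
    return gen, disc

# Hypothetical priors for three candidate answers to one question.
gen_prior = [[0.2, 0.5, 0.3], [0.6, 0.3, 0.1]]
disc_prior = [[0.3, 0.7], [0.6, 0.4], [0.5, 0.5]]
gen, disc = consensus_sketch(gen_prior, disc_prior)
# Rank candidates by how strongly the discriminator now calls them correct.
ranking = sorted(range(len(disc)), key=lambda y: disc[y][1], reverse=True)
```

A larger `lam` keeps both players nearer their priors, which mirrors the article's point about staying close to the pre-trained model.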

Once a language model generates a result, researchers ideally want its confidence in its generation to align with its accuracy, but this frequently isn’t the case. Hallucinations can occur with the model reporting high confidence when it should be low. Maohao Shen and his group, with mentors Gregory Wornell, Sumitomo Professor of Engineering in EECS, and IBM Research lab researchers Subhro Das, Prasanna Sattigeri, and Soumya Ghosh, are looking to fix this through uncertainty quantification (UQ). “Our project aims to calibrate language models when they are poorly calibrated,” says Shen. Specifically, they’re looking at the classification problem. For this, Shen allows a language model to generate free text, which is then converted into a multiple-choice classification task. For instance, they might ask the model to solve a math problem and then ask it whether the answer it generated is correct, with the options “yes, no, or maybe.” This helps to determine if the model is over- or under-confident.
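
One common way to measure the gap between confidence and accuracy described above is expected calibration error (ECE). The sketch below is a minimal illustration with made-up numbers; the article does not say which metric the team uses, so treat the choice of ECE and the function name as assumptions.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between stated confidence and observed accuracy.

    confidences: model-reported probabilities in [0, 1]
    correct:     booleans, True where the prediction was right
    """
    total = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Equal-width bins; a confidence of exactly 1.0 goes in the last bin.
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, ok))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(conf for conf, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += len(members) / total * abs(avg_conf - accuracy)
    return ece

# Illustrative data: the model says 90 percent but is right half the time.
overconfident = expected_calibration_error([0.9, 0.9, 0.9, 0.9],
                                           [True, True, False, False])
```

A score of zero means confidence and accuracy match within every bin; larger values indicate over- or under-confidence.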

Automating this, the team developed a technique that helps tune the confidence output by a pre-trained language model. The researchers trained an auxiliary model using the ground-truth information in order for their system to be able to correct the language model. “If your model is over-confident in its prediction, we are able to detect it and make it less confident, and vice versa,” explains Shen. The team evaluated their technique on multiple popular benchmark datasets to show how well it generalizes to unseen tasks, realigning the accuracy and confidence of language model predictions. “After training, you can just plug in and apply this technique to new tasks without any other supervision,” says Shen. “The only thing you need is the data for that new task.”
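
To make the idea of correcting confidence concrete, here is a sketch of temperature scaling, a standard calibration technique. It is a stand-in, not the team's auxiliary model: it fits a single scalar on labeled held-out data by grid search, and the function names, grid, and logits are illustrative assumptions.

```python
import math

def softmax(logits, temperature=1.0):
    """Softmax with a temperature; values above 1 flatten the distribution."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def negative_log_likelihood(logit_rows, labels, temperature):
    """Average negative log-probability assigned to the true labels."""
    losses = [-math.log(softmax(row, temperature)[label])
              for row, label in zip(logit_rows, labels)]
    return sum(losses) / len(losses)

def fit_temperature(logit_rows, labels, grid=None):
    """Pick the temperature from a coarse grid that minimizes held-out NLL."""
    if grid is None:
        grid = [0.25 + 0.05 * i for i in range(96)]  # 0.25 up to 5.0
    return min(grid, key=lambda t: negative_log_likelihood(logit_rows, labels, t))

# Hypothetical held-out logits: always very sure of class 0, right 3 times in 4.
held_out_logits = [[4.0, 0.0], [4.0, 0.0], [4.0, 0.0], [4.0, 0.0]]
held_out_labels = [0, 0, 0, 1]
temperature = fit_temperature(held_out_logits, held_out_labels)
calibrated = softmax([4.0, 0.0], temperature)
```

Because the model here is over-confident, the fitted temperature comes out above 1 and the calibrated top probability drops. An under-confident model would push the temperature below 1 instead.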

Victor Butoi also works to enhance model capability; his lab team — which includes John Guttag, the Dugald C. Jackson Professor of Computer Science and Electrical Engineering in EECS; lab researchers Leonid Karlinsky and Rogerio Feris of IBM Research; and lab affiliates Hilde Kühne of the University of Bonn and Wei Lin of Graz University of Technology — is creating techniques to allow vision-language models to reason about what they’re seeing, and is designing prompts to unlock new learning abilities and understand key phrases.

Compositional reasoning is just another aspect of the decision-making process that we ask machine-learning models to perform in order for them to be helpful in real-world situations, explains Butoi. “You need to be able to think about problems compositionally and solve subtasks,” says Butoi, “like, if you’re saying the chair is to the left of the person, you need to recognize both the chair and the person. You need to understand directions.” And then once the model understands “left,” the research team wants the model to be able to answer other questions involving “left.”

Surprisingly, vision-language models do not reason well about composition, Butoi explains, but they can be helped to do so using a model that can “lead the witness,” if you will. The team developed a model that was tweaked using a technique called low-rank adaptation of large language models (LoRA) and trained on an annotated dataset called Visual Genome, which has objects in an image and arrows denoting relationships, like directions. In this case, the trained LoRA model would be guided to say something about “left” relationships, and this caption output would then be used to provide context and prompt the vision-language model, making it a “significantly easier task,” says Butoi.
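
For readers unfamiliar with LoRA, the sketch below shows the core idea in plain Python: the original weight matrix stays frozen, and only two small matrices whose product forms a low-rank update are trained. The class name, sizes, and initialization scale are illustrative assumptions and say nothing about the team's actual configuration.

```python
import random

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]

class LoRALinear:
    """Frozen linear layer plus a trainable low-rank update: W x + (alpha / r) * B A x."""

    def __init__(self, weight, rank, alpha=1.0, seed=0):
        rng = random.Random(seed)
        self.out_dim, self.in_dim = len(weight), len(weight[0])
        self.rank = rank
        self.weight = weight  # pre-trained weights, never updated
        # A starts small and random, B starts at zero, so the adapted layer
        # initially behaves exactly like the original one.
        self.a = [[rng.gauss(0.0, 0.01) for _ in range(self.in_dim)]
                  for _ in range(rank)]
        self.b = [[0.0] * rank for _ in range(self.out_dim)]
        self.scale = alpha / rank

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.b, matvec(self.a, x))
        return [h + self.scale * d for h, d in zip(base, update)]

    def trainable_parameter_count(self):
        # Only A and B are trained: rank * (in_dim + out_dim) values.
        return self.rank * (self.in_dim + self.out_dim)

# Hypothetical 4x4 layer adapted with rank 1.
frozen = [[1.0, 0.0, 0.0, 0.0],
          [0.0, 1.0, 0.0, 0.0],
          [0.0, 0.0, 1.0, 0.0],
          [0.0, 0.0, 0.0, 1.0]]
layer = LoRALinear(frozen, rank=1)
output = layer.forward([1.0, 2.0, 3.0, 4.0])
```

With rank 1, this toy layer trains 8 values instead of the 16 in the full matrix. The savings grow quickly for the much larger matrices in real models.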

In the world of robotics, AI systems also engage with their surroundings using computer vision and language. The settings may range from warehouses to the home. Andi Peng and mentors Julie Shah, MIT’s H.N. Slater Professor in Aeronautics and Astronautics, and Chuang Gan, of the lab and the University of Massachusetts at Amherst, are focusing on assisting people with physical constraints, using virtual worlds. For this, Peng’s group is developing two embodied AI models — a “human” that needs support and a helper agent — in a simulated environment called ThreeDWorld. Focusing on human/robot interactions, the team leverages semantic priors captured by large language models to help the helper AI infer what the “human” agent might not be able to do and the motivation behind the actions of the “human,” using natural language. The team is looking to strengthen the helper’s sequential decision-making, bidirectional communication, ability to understand the physical scene, and sense of how best to contribute.

“A lot of people think that AI programs should be autonomous, but I think that an important part of the process is that we build robots and systems for humans, and we want to convey human knowledge,” says Peng. “We don’t want a system to do something in a weird way; we want them to do it in a human way that we can understand.”