2023 proved to be a watershed moment for generative artificial intelligence (Gen AI).

A few months after OpenAI launched ChatGPT, McKinsey researchers revealed that one-third of companies were already using generative AI in at least one business function.

Exploring generative AI trends further, the analysts estimated that the technology’s annual economic impact could reach as much as $4.4 trillion, thanks in large part to its rapid advancement. For example, by the end of this decade, generative AI models are expected to reach the median level of human performance in many tasks, roughly 40 years sooner than previously predicted.

With this overview of the most recent trends in generative AI, we are not attempting simply to describe the technology’s evolution in terms of cognition and content quality. Anyone who uses ChatGPT, with or without DALL-E, has likely noticed that its outputs have already become much more accurate and detailed.

Instead, ITRex, a generative AI company, will look at the future of generative AI in terms of the technology’s implementation in enterprise settings and assess the potential impact of specific Gen AI trends on business operations.

Top 5 generative AI trends every visionary should know about

After reviewing multiple reports and consulting with our internal R&D department, the ITRex team identified the following generative AI trends for 2024:

- The rise of multimodal generative AI solutions

- The growing popularity of small language models (SLMs)

- The evolution of autonomous agents

- The closing performance gap between proprietary and open-source Gen AI models

- The emergence of task-specific generative AI products

Let us review these trends in generative AI one by one while evaluating their impact on your company’s processes and financial performance.

Generative AI trend #1: The rise of multimodal Gen AI solutions

One of the most important trends shaping the future of generative AI is the rise of multimodal Gen AI systems.

Multimodal Gen AI solutions can understand, synthesize, and transform data across various modes and formats, such as text, images, videos, and audio clips.

For example, a multimodal generative AI system could take a written description and create an image, generate human speech from text, or even produce a video from a set of instructions.

Multimodal generative models employ deep neural networks trained on large datasets that span diverse data formats. This allows them to gain a contextual understanding of the data under analysis and take over tasks that previously required human intervention, at least to some degree. Unsurprisingly, many experts consider such multi-tasking systems to be one of the primary generative AI trends for the near future.

The key characteristics of multimodal Gen AI systems span:

- The ability to interpret and connect information across different modalities – e.g., correlating text descriptions with relevant images or videos

- The ability to create new, original content that matches the patterns learned during model training

- The ability to adapt to various tasks and applications

- The ability to combine, manipulate, and generate data across different formats

Some examples of multimodal solutions shaping the future of generative AI in enterprise settings include OpenAI’s DALL-E and CLIP, Google’s Imagen, and the Large Language and Vision Assistant (LLaVA), co-developed by Microsoft Research.
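
To make the idea of connecting information across modalities more concrete, here is a minimal sketch of how a text description might be matched to an image once both have been mapped into a shared embedding space, which is the general approach behind models such as CLIP. The vectors, file names, and the `best_match` helper are hypothetical and hand-written for illustration; a real system would obtain embeddings from a trained model.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy embeddings (assumption: made-up values, not produced by any real model).
image_embeddings = {
    "dog_photo.jpg": [0.9, 0.1, 0.0],
    "city_skyline.jpg": [0.1, 0.9, 0.2],
    "invoice_scan.png": [0.0, 0.2, 0.9],
}

# Stands in for the embedding of a caption such as "a puppy playing in the park".
text_embedding = [0.8, 0.2, 0.1]

def best_match(text_vec, images):
    """Return the image name whose embedding is closest to the text embedding."""
    return max(images, key=lambda name: cosine_similarity(text_vec, images[name]))

best_image = best_match(text_embedding, image_embeddings)
print(best_image)
```

With these toy values, the caption vector lies closest to the dog photo, so that file is returned as the best match.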

Impact

While widespread multimodal AI adoption may be a few years away, it is already redefining the knowledge economy, from personalizing treatment plans to combating fraud in banking.

Furthermore, generative AI solutions with multimodal capabilities will eliminate the need to buy or develop standalone AI applications for each task. This will allow businesses to cut IT costs and better integrate technology systems and departments.

The success of multimodal Gen AI systems largely depends on the availability and quality of training data, mature enterprise AI governance models, and effective usage of computing resources. Despite these dependencies, the rise of such generative AI solutions appears inevitable.

Generative AI trend #2: Greater adoption of small language models (SLMs)

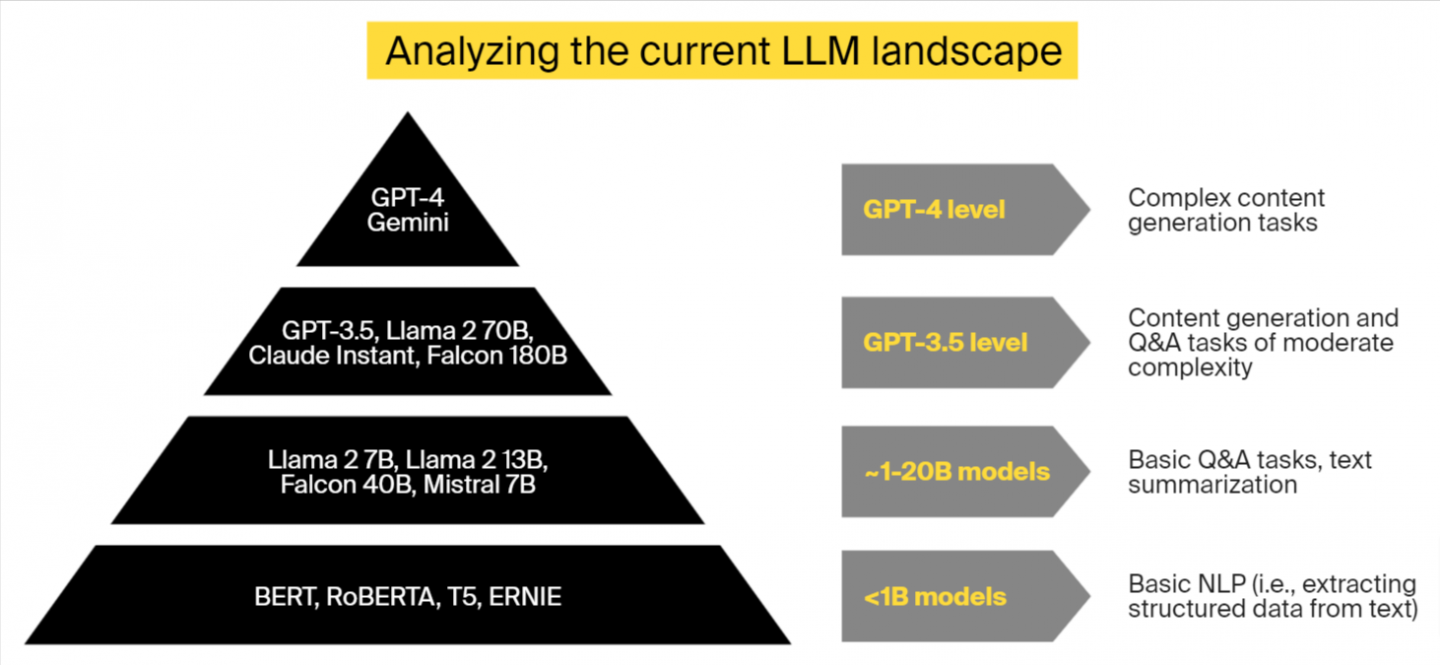

Small language models, which are essentially scaled-down versions of large language models (LLMs), such as the GPT models behind OpenAI’s ChatGPT, will be a defining generative AI trend in 2024.

SLMs excel in a variety of natural language processing (NLP) tasks, including basic question answering and text classification, despite having far fewer parameters and using far fewer resources than their larger counterparts.

Their strength stems from the quality of the training data they’ve consumed. Unlike large language models, whose training datasets frequently include a lot of noisy internet content of questionable quality, small models often rely on curated, high-quality information from research papers, journals, and textbooks.

As a result, solutions like DistilBERT, a distilled version of Google’s BERT developed by Hugging Face, can retain up to 97% of their source model’s language understanding capabilities while being 40% smaller and 60% faster.

Impact

This year, we anticipate more businesses will start using and refining small language models for specific, narrowly defined tasks while making sure to comply with industry-specific regulations.

The success of small language models could be crucial for the future of generative AI, inspiring more companies to make it part of their technology stack.

The impact of this generative AI trend will be visible across several areas, including:

- Lower computational costs. SLMs require fewer computational resources for training and deployment, which results in lower operational costs for businesses. For instance, Microsoft’s Phi-2 model has 2.7 billion parameters. Although some of the models it is compared against are up to 25 times larger, it performs admirably in a variety of benchmarks, including reasoning and language understanding. Training Phi-2 took 14 days using 96 A100 GPUs, a significant setup that nonetheless uses far fewer resources than models with hundreds of billions of parameters require. The cost optimization factor will almost certainly contribute to the rise of generative AI in the enterprise world.

- Reduced environmental impact. Generative AI tools for text synthesis have a relatively low environmental impact. For example, processing 1,000 text prompts consumes about 16% of the energy required to charge a smartphone. Creating the same amount of image or video-based content with a large Gen AI model produces emissions comparable to driving 4.1 miles in a gas-powered vehicle. Companies that use smaller language models rather than multipurpose large ones can reduce IT infrastructure costs and align their operations with the growing corporate focus on sustainability and environmental responsibility.

- Building trust in generative AI. SLMs can produce more targeted and potentially more accurate outputs for specific domains or tasks because they rely on carefully selected and high-quality training data. This is especially useful in industries such as healthcare, finance, and legal services, where precision and dependability are essential. Higher accuracy of SLM outputs may help alleviate concerns about the future of generative AI in business. Only 9% of organizations currently use the technology extensively, due in part to ongoing model hallucinations and other Gen AI implementation challenges.

- Innovative business models. As SLMs become more common, new business models centered on developing, customizing, and deploying these models for specific industry requirements may emerge (more on that later).
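
To put the Phi-2 training figures from the list above into perspective, the following minimal sketch converts them into GPU-hours and shows how a rough cost estimate could be derived. The hourly rate is a placeholder assumption, not a quoted price; substitute the rate from your own cloud contract.

```python
def gpu_hours(num_gpus, days):
    """Total GPU-hours for a training run that keeps all GPUs busy around the clock."""
    return num_gpus * days * 24

def estimated_cost(total_gpu_hours, usd_per_gpu_hour):
    """Rough compute cost, ignoring storage, networking, and engineering time."""
    return total_gpu_hours * usd_per_gpu_hour

# Figures cited in the article: 96 A100 GPUs for 14 days.
phi2_gpu_hours = gpu_hours(num_gpus=96, days=14)

# ASSUMPTION: hypothetical hourly rate, used only to illustrate the arithmetic.
ASSUMED_USD_PER_GPU_HOUR = 2.0
phi2_cost = estimated_cost(phi2_gpu_hours, ASSUMED_USD_PER_GPU_HOUR)

print(phi2_gpu_hours)  # 32256
print(phi2_cost)       # 64512.0 under the assumed rate
```

The same two functions can be reused to compare a planned fine-tuning run against a larger model’s budget by changing the GPU count and duration.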

Generative AI trend #3: The evolution of autonomous agents

Another Gen AI trend to watch out for is the rise of autonomous agents.

The term is used to describe AI models that perform tasks, make decisions, and produce content without human intervention. This is in stark contrast to current Gen AI solutions, which require carefully crafted prompts to deliver high-quality, accurate outputs.

While training such autonomous agents, a generative AI development company can use various techniques, from reinforcement learning to supervised and unsupervised learning.

Without cutting-edge tools to support their development, however, autonomous agents would not have made it onto our list of Gen AI trends.

Among these tools is LangChain, an open-source framework that allows developers to link multiple prompts into chains, enabling models to perform more complex tasks. Another solution is LlamaIndex, a data framework for language models that allows autonomous agents to interact with external data sources, giving them a form of memory.
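
The idea of linking prompts into a chain can be illustrated without any framework. The sketch below is plain Python and does not use LangChain’s actual API; `fake_model`, `run_chain`, and the prompt templates are hypothetical names introduced here, and the model is a stub that only echoes its prompt.

```python
from typing import Callable, List

def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a language model call; it only echoes the prompt."""
    return f"<answer to: {prompt}>"

def run_chain(templates: List[str], user_input: str, model: Callable[[str], str]) -> str:
    """Run prompts in sequence, feeding each output into the next template's {input} slot."""
    current = user_input
    for template in templates:
        prompt = template.format(input=current)
        current = model(prompt)
    return current

# Two-step chain: summarize first, then draft a reply from the summary.
steps = [
    "Summarize the following customer email: {input}",
    "Draft a polite reply based on this summary: {input}",
]

result = run_chain(steps, "My order arrived damaged.", fake_model)
print(result)
```

In a real deployment, `fake_model` would be replaced by a call to an actual model, and each step could add validation or tool use before passing its output on.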

Impact

While powerful and effective, today’s generative AI solutions rely heavily on prompting, which has given rise to a separate job role: the prompt engineer and librarian. Such specialists are in high demand, earning as much as $335,000 per year. Without carefully crafted prompts, even the most advanced Gen AI tools can produce irrelevant content or miss important patterns in corporate data when performing analytics tasks. This dependence on prompting could hold back the adoption of generative AI.

Another drawback is hallucination – a phenomenon where Gen AI models produce plausible but incorrect answers. Up to 89% of AI experts who work with generative AI report that their models frequently display hallucinations, and 77% of generative AI users have already experienced hallucinations that have led them astray.

Autonomous agents, which are powered by “prompt chaining” and access to verified data sources, open up new possibilities for generative AI applications in business while lowering IT costs and freeing up employees’ time for strategic work. This could be one of the most important factors shaping the future of generative AI, as well as the key to end-to-end intelligent process automation.
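
As a rough illustration of what access to verified data sources can mean in practice, the sketch below picks the most relevant document from a small, trusted collection and places it in the prompt so that the model answers from that source. The documents, the keyword-overlap scoring, and the function names are simplifying assumptions made for this example; production systems typically rely on embedding-based search.

```python
def tokenize(text):
    """Lowercase the text, drop basic punctuation, and return the set of words."""
    return set(text.lower().replace("?", "").replace(".", "").split())

# ASSUMPTION: hypothetical, hand-written documents standing in for a verified knowledge base.
VERIFIED_DOCS = {
    "refund_policy": "Refunds are issued within 14 days of a return request.",
    "shipping_policy": "Standard shipping takes 3 to 5 business days.",
}

def retrieve(question, docs):
    """Return the key of the document sharing the most words with the question."""
    question_words = tokenize(question)
    return max(docs, key=lambda key: len(question_words & tokenize(docs[key])))

def build_grounded_prompt(question, docs):
    """Compose a prompt that instructs the model to answer only from the retrieved source."""
    key = retrieve(question, docs)
    return f"Answer using only this source ({key}): {docs[key]}\nQuestion: {question}"

prompt = build_grounded_prompt("How long does shipping take?", VERIFIED_DOCS)
print(prompt)
```

Constraining the prompt to a retrieved source in this way is one common tactic for reducing hallucinations, although it does not eliminate them.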

Generative AI trend #4: The closing performance gap between proprietary and open-source Gen AI models

One of the most significant generative AI trends is the narrowing performance gap between commercially available and open-source Gen AI models. Here’s the context.

When it comes to implementing generative AI, enterprises’ options are typically limited to two:

- Using proprietary Gen AI solutions, such as OpenAI’s ChatGPT, as-is or retraining them on specific data

- Opting for an open-source model like GPT-2 or GPT-Neo, which can be used without modification or retrained on your data

Each approach has advantages and disadvantages, particularly in terms of generative AI costs, and may influence the future of generative AI in the business world.

For example, pre-trained commercially available Gen AI solutions have a whole back-end architecture, eliminating the need to purchase physical or cloud servers. Furthermore, Gen AI companies provide APIs for integrating their technologies with third-party apps, as well as extensive documentation and customer support.

However, the usage of proprietary Gen AI models may result in high infrastructure expenditures, reducing the return on your technological investment. There is also a risk of vendor lock-in, which occurs when your company becomes overly reliant on a third-party entity to complete mission-critical activities.

Open-source models, on the other hand, offer greater flexibility in terms of customization and infrastructure setup. However, they typically have fewer parameters, which results in more modest cognitive capabilities.

Through 2024 and beyond, we will see more open Gen AI projects match the performance of proprietary models – this will be one of the key generative AI trends.

The first signs are there already.

In February 2024, Google unveiled Gemma, a family of open models built on the same research and technology as its Gemini generative AI models. Unlike its older sister, Gemma is suitable for text-based tasks only and, at least for now, operates exclusively with English-language texts. However, independent research suggests the new open tool performs strongly in multiple scenarios, surpassing other open large language models, such as Llama 2 and Mistral 7B.

Other examples of powerful open-source Gen AI models include the aforementioned GPT-Neo, which excels in automated content generation and data analytics; BionicGPT, which is highly suitable for intelligent chatbot development; and Falcon 180B, which handles text classification, sentiment analysis, and translation tasks.

Impact

Open-source Gen AI model developments will eventually lower the barrier to AI adoption for medium-sized and small firms while also assisting organizations in meeting their Gen AI integration, customization, scalability, and IT budget objectives.

Specifically, ITRex anticipates this generative AI trend to have the following impact on businesses:

- AI democratization. As open-source models begin to compete with proprietary models in terms of performance, smaller organizations and startups will enjoy unprecedented access to high-quality AI tools for a fraction of the cost of commercial alternatives.

- Cost reduction. By leveraging sophisticated open-source models without incurring hefty license fees or infrastructure expenditures, businesses may expect to significantly reduce their total cost of ownership for AI projects. This generative AI trend can also increase the return on investment (ROI) for AI initiatives, making it easier for businesses to integrate artificial intelligence into their operations and products.

- Risk mitigation. The proliferation of high-performance open-source alternatives provides businesses with more options, reducing the risk of vendor lock-in and increasing the autonomy of their technological infrastructure.

- Transparency and compliance. Open-source models, which provide greater transparency in their development process and decision-making logic, could be a key driver for future enterprise applications of generative AI. Companies using such models will be able to review and validate their codebase to ensure that it meets regulatory standards, data protection legislation, and ethical guidelines.

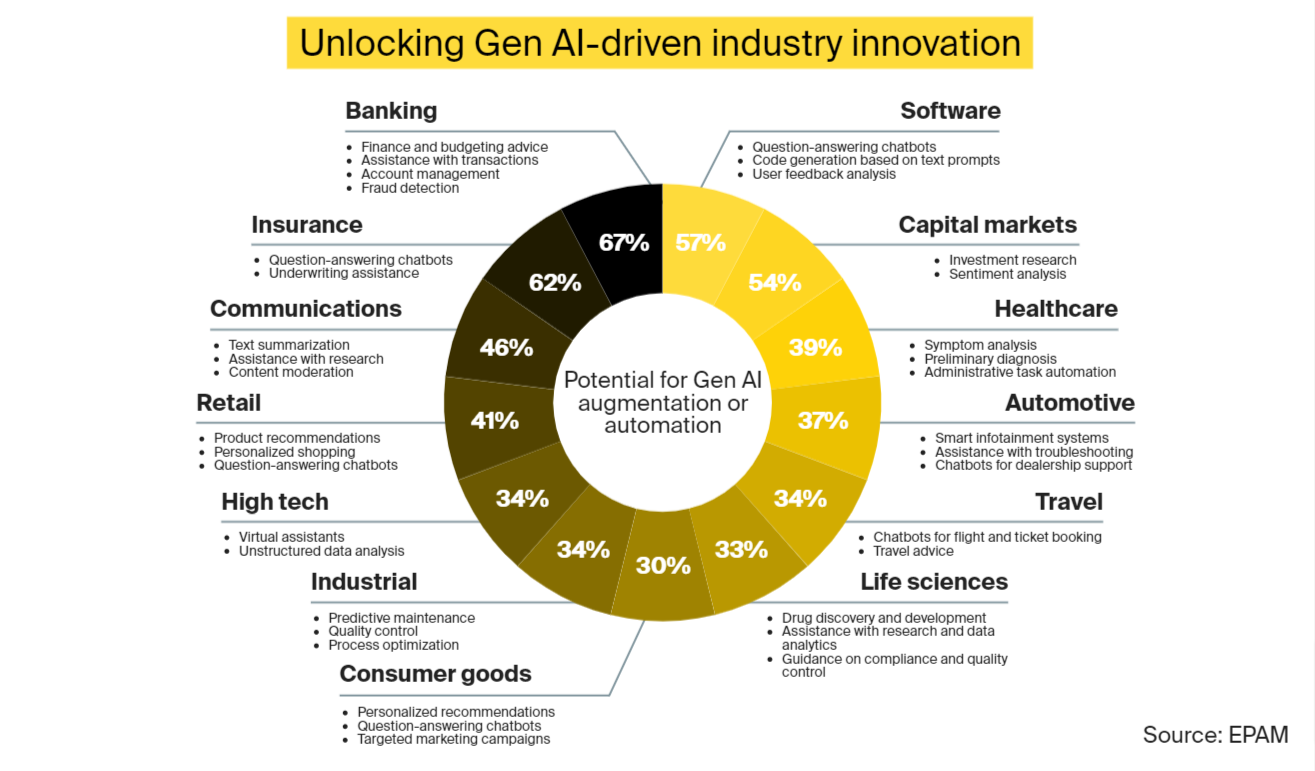

Generative AI trend #5: The emergence of Gen AI solutions tailored to specific business needs

The future of enterprise generative AI depends heavily on the evolution of the application tier and the vertical software that comprises it.

We are currently witnessing rapid advances in Gen AI applications that serve as add-ons to existing software systems. Although these solutions are effective, their cognitive and automation potential is largely limited by the capabilities of the underlying models.

The creation of innovative, Gen AI-native applications that address particular business needs could unlock a lot more value, particularly in sectors where artificial intelligence hasn’t had much of an impact so far. Among the generative AI trends discussed in this article, this one may be especially important for businesses considering Gen AI.

Consider business and financial operations as an example. MCSI estimates that 35% of the sector’s tasks have a high potential for automation using AI. However, while up to 88% of financial institutions are experimenting with AI, company-wide deployments remain rare.

By incorporating generative AI into their technology stack, business development and financial services professionals can improve or completely automate tasks such as customer support, underwriting, and market analysis. Gen AI systems can also produce synthetic data to improve the performance of financial models.

This generative AI trend has not gone unnoticed by companies with access to extensive financial data. Bloomberg recently introduced BloombergGPT, a language model with 50 billion parameters designed specifically for finance. It can handle tasks such as question answering and sentiment analysis. Another example is JPMorgan Chase’s IndexGPT, which uses generative AI to provide informed investment recommendations.

While organizations with data moats – a competitive advantage companies gain by accumulating large amounts of unique data – are currently at the forefront of this disruption, smaller businesses could venture into developing industry and task-specific applications, too, defining the future of generative AI.

According to CB Insights, technology companies working on such applications raised $800 million in funding between Q4 2022 and Q3 2023, closing more deals than startups in the generative AI infrastructure segment. Kasisto, a FinTech company that runs KAI-GPT, a large language model for banking and finance, is an example of such startup-led innovation.

Impact

Vertical and task-specific software solutions could become one of the crucial generative AI trends for the coming years, impacting the enterprise segment in multiple ways:

- Maximized value. Industry-specific Gen AI models outperform general-purpose models thanks to their ability to handle specialized tasks with greater precision and context understanding. They excel at accuracy, particularly in complex fields such as healthcare and finance, because they understand the specific terminologies and requirements of those domains. Furthermore, such models are adaptable and capable of providing tailored experiences. As AI pioneers, we will continue to monitor the latest trends in generative AI, with a focus on task-specific models and their performance in narrowly defined tasks.

- Increased innovation. Tailored Gen AI applications enable businesses to experiment with new products and services, fostering innovation in industries that were previously untapped for AI’s full potential. For example, Insilico Medicine, a Hong Kong company that uses genomics, big data, and deep learning for drug discovery, was able to reduce the drug discovery and development process to just three years, compared to the pharmaceutical industry’s standard 10-15 year benchmark. Their novel treatment for chronic lung disease was developed using generative AI, which accurately predicted how drug compounds would interact within the human body.

- Lower entry barrier. Although initially led by data behemoths, this generative AI trend may pave the way for smaller entities to adopt and customize Gen AI solutions, democratizing access to advanced AI technologies.

A glimpse into the future of generative AI: summing it up

Generative AI represents a major disruption similar to the emergence of the internet or smartphones. It could affect up to 80% of the workforce to varying degrees and potentially automate 300 million full-time jobs worldwide while boosting annual global GDP by up to 7%.

While the technology is not expected to have a significant impact on enterprise IT budgets until 2025, C-level executives from all industries should stay up to date on the latest generative AI trends in order to identify and capitalize on emerging opportunities.

Feel free to contact ITRex to learn how Gen AI may affect your business. We can talk about specific use cases, test your assumptions with proof of concept (PoC), and create a successful generative AI strategy.

The post Generative AI Trends for 2024: Measuring the Impact appeared first on Datafloq.